There are often occasions when I have to change database parameters, some of which can only be set with scope=spfile rather than scope=both. The intention is to make the change now and have it take effect at the next scheduled bounce of the RAC. Recently, a colleague made such a change, and we were waiting for the next opportunity to bounce the RAC. Unfortunately, the server supporting node 4 of the RAC crashed, and Cluster Services attempted to restart node 4 once the server was back up. At that point we encountered the error below:

    [srvrnode4-OMZPOAW4] srvctl start instance -d RACDB -i RACDB4
    PRCR-1013 : Failed to start resource ora.racdb.db
    PRCR-1064 : Failed to start resource ora.racdb.db on node srvrnode4
    CRS-5017: The resource action "ora.racdb.db start" encountered the following error:
    ORA-01105: mount is incompatible with mounts by other instances
    ORA-01677: standby file name conversion parameters differ from other instance
    . For details refer to "(:CLSN00107:)" in "/oracle/product/diag/crs/srvrnode4/crs/trace/crsd_oraagent_oracle.trc".

At this point, nodes 1-3 were running with a set of parameters in memory that differed from the parameters in the spfile that node 4 was attempting to start with. We were left with two options:

a. shut down nodes 1-3 of the RAC and start all nodes together from the spfile

b. reset the spfile to the values that nodes 1-3 were running with and start node 4

Option a. was not possible, as it would have impacted the users. Option b. was a difficult choice because:

01. we would undo the work we had done pending a bounce

02. a few days had passed since the ALTER SYSTEM scope=spfile commands were issued, so we were not 100% sure which changes had been made

We decided to back up the spfile to a pfile (preserving all the desired changes) and then look for differences between the pfile and the parameters in memory on nodes 1-3. For each difference, we issued an ALTER SYSTEM command with scope=spfile to set the spfile back to the value in memory. Once this was complete, we were able to start node 4, as the spfile now matched what was in the memory of nodes 1-3. Instead of reapplying the desired changes to the spfile and going back to square one, with changes pending a bounce, we changed the .profile to display the required commands as a reminder to issue them at the next bounce of the RAC.

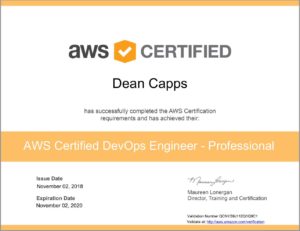
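The comparison between the backed-up pfile and the in-memory values was done by hand, but it could be sketched in a few lines of Python. This is only an illustration under some assumptions: the pfile text is the backup taken from the spfile, `memory_params` is a dictionary of name/value pairs collected from a running node (for example from `v$parameter`), and the function names are hypothetical helpers, not part of any Oracle tooling. Values are compared as comma-separated lists with quotes stripped, which is a simplification, so anything it reports should still be reviewed before running a command.

```python
import re

# Matches pfile entries such as "*.processes=1500" or "RACDB4.instance_number=4".
PFILE_LINE = re.compile(
    r"^(?P<sid>[^.\s]+)\.(?P<name>[A-Za-z_][\w$]*)\s*=\s*(?P<value>.*)$"
)


def parse_pfile(text, sid="*"):
    """Return {parameter: raw value} for pfile entries belonging to `sid`."""
    params = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        match = PFILE_LINE.match(line)
        if match and match.group("sid") == sid:
            params[match.group("name").lower()] = match.group("value").strip()
    return params


def normalize(value):
    """Split on commas and strip quotes so 'a','b' compares equal to a, b."""
    return tuple(part.strip().strip("'\"") for part in value.split(","))


def spfile_drift(pfile_params, memory_params):
    """Return {name: (pfile value, memory value)} where the two differ."""
    drift = {}
    for name, pfile_value in pfile_params.items():
        memory_value = memory_params.get(name)
        if memory_value is None:
            continue  # not collected from memory; needs a manual check
        if normalize(pfile_value) != normalize(memory_value):
            drift[name] = (pfile_value, memory_value)
    return drift


def reminder_statements(drift):
    """Build reminder commands that reapply the desired (pfile) values."""
    return [
        f"ALTER SYSTEM SET {name}={pfile_value} SCOPE=SPFILE SID='*';"
        for name, (pfile_value, _memory_value) in sorted(drift.items())
    ]


if __name__ == "__main__":
    # Made-up values for illustration only.
    pfile_text = "*.processes=1500\n*.open_cursors=300\n"
    memory = {"processes": "1000", "open_cursors": "300"}
    drift = spfile_drift(parse_pfile(pfile_text), memory)
    for name, (wanted, running) in drift.items():
        print(f"{name}: spfile={wanted} memory={running}")
    print("\n".join(reminder_statements(drift)))
```

The drift report lists the parameters to set back to their in-memory values so that node 4 can start, while the generated statements are the kind of reminder that could be echoed from the .profile until the next bounce.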
